
Amazon S3 is a flexible service that lets businesses store and manage their data securely and at scale, which makes it a common component of cloud-based applications and services.

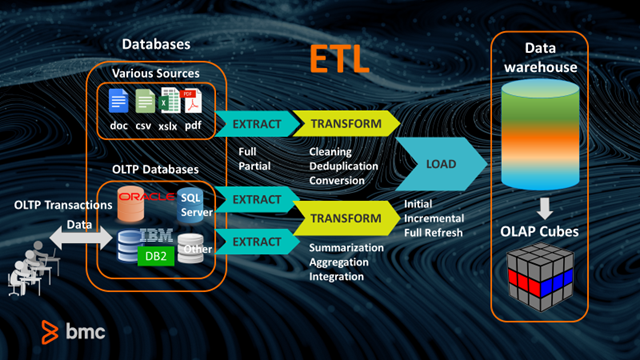

S3 lets users store and retrieve data as objects, each of which can be up to 5 terabytes in size. These objects can be accessed through a web interface or through APIs, which makes it easy to integrate S3 with other AWS services or third-party applications. S3 is highly scalable, secure, and durable, so it suits businesses of all sizes. It is also designed for high availability, keeping data accessible and protected against loss. In addition, S3 offers features such as versioning, lifecycle policies, and access control that help users manage their data effectively.

What is an ETL pipeline? An ETL (Extract, Transform, and Load) pipeline extracts data from multiple sources such as transaction databases, APIs, or other business systems, transforms it, and loads it into a cloud-hosted database or a cloud data warehouse for deeper analytics and business intelligence. ETL is a type of batch processing, in which data is collected first and then processed as a group.

S3 can take part in the transform step as well. An S3 Object Lambda Access Point transforms data as it is retrieved, and a Python script can print both the original data from the S3 bucket and the transformed data from the S3 Object Lambda Access Point.
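The comparison of original and transformed data can be sketched roughly as follows. This is a minimal sketch, not the original script: the bucket name, access point ARN, and object key are hypothetical placeholders, and the helper name `read_original_and_transformed` is made up for illustration. It assumes the `boto3` SDK is installed and AWS credentials are configured, and it relies on `get_object` accepting an Object Lambda Access Point ARN in place of a bucket name.

```python
def read_original_and_transformed(s3_client, bucket, object_lambda_arn, key):
    """Read the same key twice: once directly from the bucket and once through
    the Object Lambda Access Point, whose ARN is passed as the Bucket argument."""
    original = s3_client.get_object(Bucket=bucket, Key=key)["Body"].read()
    transformed = s3_client.get_object(Bucket=object_lambda_arn, Key=key)["Body"].read()
    return original.decode("utf-8"), transformed.decode("utf-8")


def main():
    import boto3  # third-party AWS SDK; imported here so the helper stays testable

    # Hypothetical placeholders: replace these with your own resources.
    bucket = "example-bucket"
    object_lambda_arn = (
        "arn:aws:s3-object-lambda:us-east-1:111122223333:accesspoint/example-olap"
    )
    key = "example.txt"

    original, transformed = read_original_and_transformed(
        boto3.client("s3"), bucket, object_lambda_arn, key
    )
    print("Original data from the S3 bucket:")
    print(original)
    print("Transformed data from the S3 Object Lambda Access Point:")
    print(transformed)
```

Call `main()` once the placeholders point at real resources. What the transformed output looks like depends entirely on the Lambda function attached to the access point.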

S3 is designed to store and retrieve any amount of data from anywhere on the web. An ETL process typically involves loading data from disparate sources, transforming or enriching it, and storing the curated data in a data warehouse for consumption by different users or systems.
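The extract, transform, and load steps described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the source is an inline CSV string rather than a real business system, an in-memory SQLite database stands in for the data warehouse, and the `orders` table and all function names are invented for the example.

```python
import csv
import io
import sqlite3

# Assumed sample input; a real pipeline would read from databases, APIs, or files.
RAW_CSV = """order_id,customer,amount
1, alice ,10.50
2,BOB,4.25
3,alice,
"""


def extract(text):
    """Extract: parse the raw CSV text into a list of dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: normalize customer names and drop rows with no amount."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # skip incomplete records
        customer = row["customer"].strip().lower()
        cleaned.append((int(row["order_id"]), customer, float(amount)))
    return cleaned


def load(rows, conn):
    """Load: write the curated rows into the stand-in warehouse table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


def run_pipeline(text, conn):
    """Run the three steps, then query the loaded data as a consumer would."""
    load(transform(extract(text)), conn)
    query = (
        "SELECT customer, SUM(amount) FROM orders "
        "GROUP BY customer ORDER BY customer"
    )
    return conn.execute(query).fetchall()
```

Calling `run_pipeline(RAW_CSV, sqlite3.connect(":memory:"))` returns per-customer totals from the loaded table. Keeping each stage as its own function makes it straightforward to swap the source or the destination later.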

Amazon S3 (Simple Storage Service) is a cloud-based object storage service provided by Amazon Web Services (AWS). ETL stands for Extract, Transform, and Load, and ETL processes are an essential component of any data analytics program. Data orchestration involves using different tools and technologies together to extract, transform, and load data from multiple sources into a central repository. It typically combines technologies such as data integration tools and data warehouses. Apache Airflow is a tool for data orchestration.
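To make the idea of orchestration concrete, here is a toy sketch of the core job an orchestrator performs: running tasks in an order that respects their dependencies. It deliberately does not use Apache Airflow's API; it uses only the `graphlib` module from the Python standard library (Python 3.9 or later), and the task names and helper functions are hypothetical.

```python
from graphlib import TopologicalSorter


def run_in_dependency_order(tasks, dependencies):
    """Run each task after everything it depends on.

    tasks: maps a task name to a zero-argument callable.
    dependencies: maps a task name to the set of task names that must run first.
    Returns the list of task names in the order they were executed.
    """
    order = list(TopologicalSorter(dependencies).static_order())
    for name in order:
        tasks[name]()
    return order


def build_demo(log):
    """Build a three-step ETL chain whose tasks only record that they ran."""
    tasks = {
        "extract": lambda: log.append("extract"),
        "transform": lambda: log.append("transform"),
        "load": lambda: log.append("load"),
    }
    dependencies = {
        "extract": set(),
        "transform": {"extract"},
        "load": {"transform"},
    }
    return tasks, dependencies
```

A real orchestration tool adds scheduling, retries, and monitoring on top of this ordering step, which is why pipelines beyond a handful of tasks are usually handed to one.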
